Your metrics

look IMPRESSIVE!

oh, wow!

(Such a shame, you don’t trust the data behind them!)

(the trust issues, not the impressiveness)

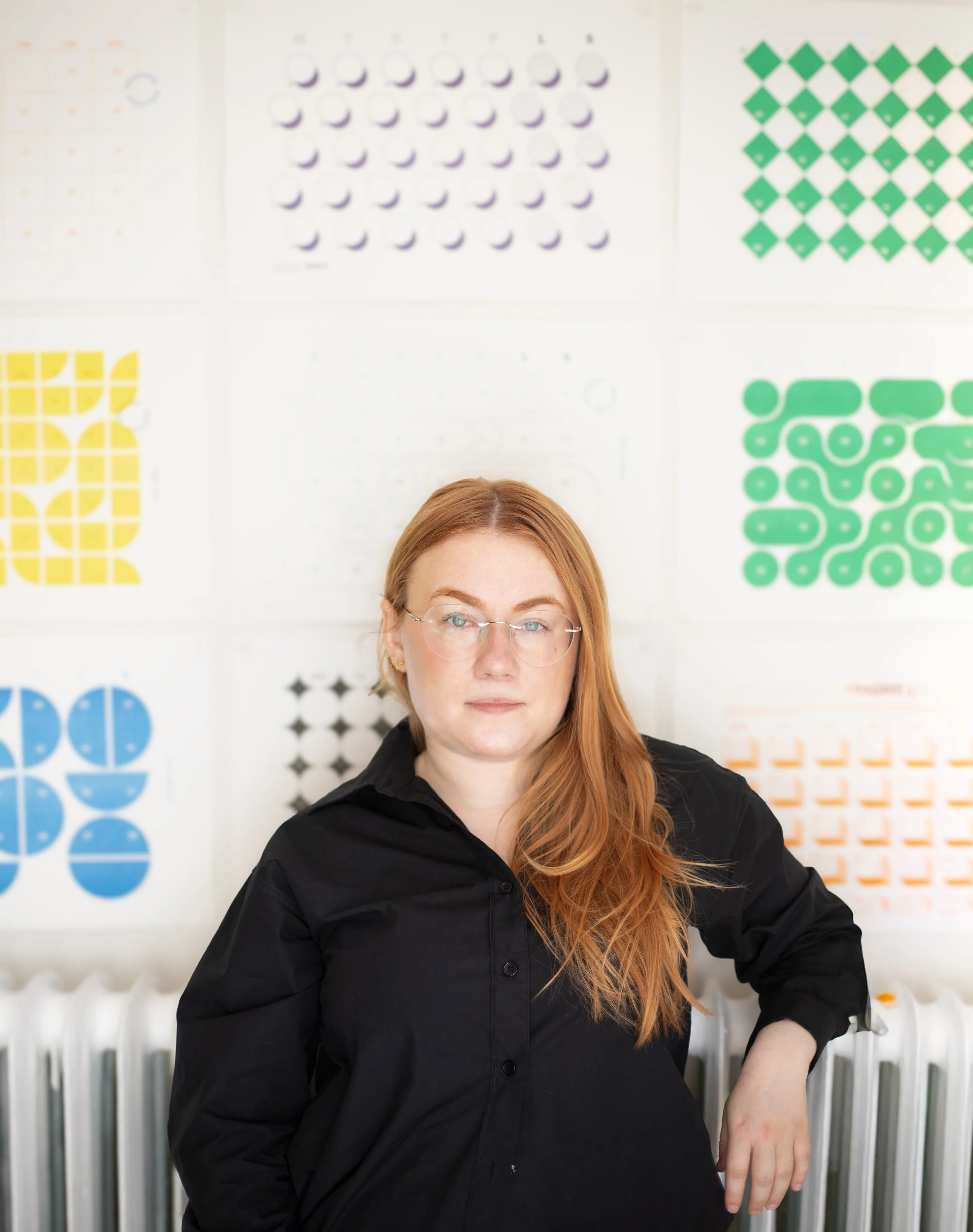

But first, let me introduce myself:

I'm Kristina,

and I’VE SPENT the last DECADE FIXING DATA my clients DIDN'T KNOW WAS BROKEN.

From streaming platforms to FinTech, to healthcare, to Mars (yep, the planet), I’ve helped identify anomalies and find solutions to difficult problems.

And, well, yes — helped expand the music taste of hundreds of thousands of people along the way.

How to tell you need me?

(Add a point for every statement you find relatable.)

You want to be data-driven.

But the only thing the data is driving is you. Insane.

You're planning an AI implementation.

And your data quality is not there yet. Brave!

Your dashboards look great.

And none of them match!

You do A LOT of A/B* testing

*B as in “bias”

You're trying to understand drop-off in the flow.

The main suspects are several events, all with the same name.

Scored more than 0? Let’s talk about how to get you back to square one and do it right this time. Book a 30-minute call.

But what do I actually do for companies?

1. We start with the holy triage:

Audit decision-making process and KPI definitions

Reconcile schemas and streamline data pipelines across sources

Standardise event tracking

2. Move on to all things money

Disentangle correlation from causation when decisions depend on it

Ensure analytical results hold up outside the spreadsheets

Combine descriptive analytics, causal inference, and predictive modeling to explain past behavior, and predict future outcomes

Safeguard big and small business changes with properly powered A/B tests
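For the curious, here is roughly what "properly powered" means in numbers. This is a minimal sketch, assuming a conversion-rate metric, a two-sided two-proportion z-test, and equal-sized arms. The function name `sample_size_per_arm` and the example rates are hypothetical, chosen only for illustration; a real test would start from your own baseline and the smallest effect worth acting on.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_arm(baseline_rate, mde_abs, alpha=0.05, power=0.80):
    """Approximate users needed per arm for a two-sided two-proportion z-test.

    baseline_rate: control conversion rate, e.g. 0.10 (illustrative value)
    mde_abs: minimum detectable effect as an absolute lift, e.g. 0.01
    """
    p1 = baseline_rate
    p2 = baseline_rate + mde_abs
    if not (0 < p1 < 1 and 0 < p2 < 1):
        raise ValueError("both conversion rates must lie strictly between 0 and 1")

    # Critical values of the standard normal for the chosen alpha and power.
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)

    # Pooled variance under the null, unpooled variance under the alternative.
    p_bar = (p1 + p2) / 2
    null_term = z_alpha * sqrt(2 * p_bar * (1 - p_bar))
    alt_term = z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))

    return ceil((null_term + alt_term) ** 2 / mde_abs ** 2)


if __name__ == "__main__":
    # Hypothetical scenario: 10% baseline, detect a 1 percentage point lift.
    print(sample_size_per_arm(0.10, 0.01))
```

The useful intuition: halving the effect you want to detect roughly quadruples the users you need, so the smallest effect worth detecting is a business decision before it is a statistical one.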

3. Support further growth through good experiments

If we're being rigorous — and we are — proper experiment design accounts for each of the following:

estimand-first design • well-defined exposure and assignment • correct randomization granularity • stratified sampling and covariate balance • intent-to-treat vs. per-protocol framing • ex-ante power / MDE calculation • CUPED • multiple testing correction • spillovers and cannibalization • interference and network effects • novelty and primacy effects • survivorship bias in cohort selection • sequential monitoring with predefined stopping boundaries • Bayesian stopping rules • cluster-robust and heteroskedasticity-robust inference • regression discontinuity and diff-in-diff • metric invariance and guardrail validation • analysis plans fixed prior to readout • reproducible pipelines and versioned outputs • decisions mapped to posterior / test outcomes

So, do not worry:

I can design you a textbook-perfect experiment.

Chances are, though, you don't have textbook-perfect conditions.

What you do have is a real product, real timelines, a team that needs answers by Thursday, and a sample size that won't magically double.

So we design experiments that actually work — for your org, your scale, your constraints. The right methodology for the question you're actually asking, not the one that looks best on paper.

TRUE sophistication is knowing

when not to be sophisticated.

4. Then — we’ll talk about the thing

& go through the AI-READINESS checklist:

❑ honest assessment: do you actually need ML/AI, or will you do better with proper regression?

❑ reality-check on real LLM use cases vs. hype chasing

❑ getting infrastructure ready to support the real magic AI can deliver if you let it.

❑ data quality & alignment audit, before you invest in models

5. Finally, we’ll shed some light on the true state of your:

data invisibility

inaccurate attribution

team misalignment

irrational decision making

data illiteracy

unreliable dashboards

non-scalable infrastructure

disorganised pipelines

overfitted predictions

underpowered tests

redefined success criteria

knowledge discontinuity

Put your finger on it

Seeing the real picture is rarely as pleasant as we imagine it to be. And still, in business as in life, facing reality head-on is the only path to growth.